In May 1999, I took an architecture/sculpture course titled “Idiosyncratic Apparatus” (Sue Reese and Donald Sherifkin). I was interested in visualizing the paths traced out by individuals as they moved through spaces. I wanted to make the architectural concept of the “program of circulation” concrete and measurable. I was also curious what might be revealed about the social psychology of individual interaction if there were a methodology for precisely recording the positions and velocities of individuals in relation to each other and the environment they were moving through.

Would it be possible to construct theories of social interaction from these trajectories the same way physicists used trails etched in bubble chambers to determine properties of elementary particles? The result was a prototype art/sociology/technology piece which attempted to visualize the trajectories in space and time traced out by theatergoers in “Newman Court”, a lobby outside of a theater space at Bennington College. The project built upon previous work I did with Ruben Puentedura on object motion tracking in video. Since then there has been very interesting work done by Dirk Helbing et al. on the physics of moving people.

I set up a camera on a balcony and digitized video footage of people waiting in Newman Court to enter a drama performance. Using a macro I wrote for Object Image (a medical image processing program, now called ImageJ), I extracted the pixel location of the feet of each person in each frame. This information was recorded along with the frame number to give coordinates in time and space.

Because the camera was not directly above and orthogonal to the floor, I needed to transform the recorded pixel data to remove the distortions of perspective. To do this I located calibration points in the image which I could compare to measured positions in the space. Physics professor Norm Derby provided me with a set of translation equations to transform the video coordinates back into real space using the calibration points – essentially projecting the screen points onto the plane of the floor.
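The original equations are not reproduced in this post. One standard way to do this kind of correction is a planar homography fitted to four calibration points, and the sketch below assumes that approach rather than the exact formulation used in the project. The function names and the calibration coordinates are made up for illustration.

```python
def solve_linear(a, b):
    """Solve a*x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("calibration points are degenerate")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_homography(pixel_pts, floor_pts):
    """Fit a 3x3 homography (bottom-right entry fixed at 1) from four point pairs."""
    if len(pixel_pts) != 4 or len(floor_pts) != 4:
        raise ValueError("this sketch needs exactly four calibration pairs")
    a, b = [], []
    for (u, v), (x, y) in zip(pixel_pts, floor_pts):
        # Each pair gives two linear equations in the eight unknowns.
        a.append([u, v, 1, 0, 0, 0, -x * u, -x * v])
        b.append(x)
        a.append([0, 0, 0, u, v, 1, -y * u, -y * v])
        b.append(y)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def pixel_to_floor(h, u, v):
    """Project a pixel position (u, v) onto the floor plane."""
    w = h[2][0] * u + h[2][1] * v + h[2][2]
    x = (h[0][0] * u + h[0][1] * v + h[0][2]) / w
    y = (h[1][0] * u + h[1][1] * v + h[1][2]) / w
    return x, y


# Hypothetical calibration: a 4 m x 6 m floor rectangle that appears
# as a trapezoid in the image because of perspective.
pixel_corners = [(120, 400), (520, 400), (420, 150), (220, 150)]
floor_corners = [(0, 0), (4, 0), (4, 6), (0, 6)]
H = fit_homography(pixel_corners, floor_corners)
print(pixel_to_floor(H, 320, 300))  # floor position of one foot pixel
```

With more than four calibration points, a least-squares fit would be the natural extension, since it averages out measurement error in any single point.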

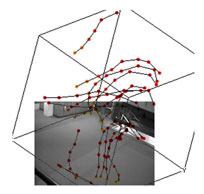

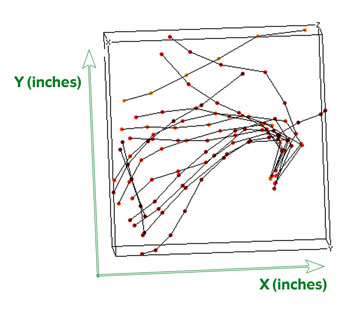

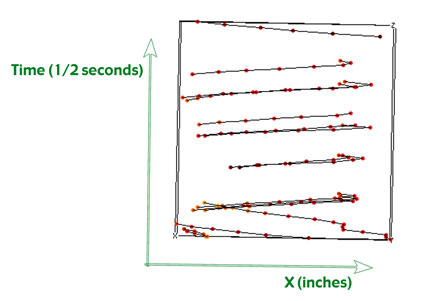

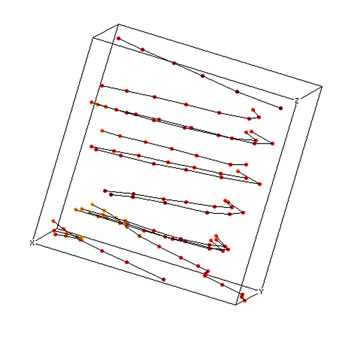

I wanted to get a picture of the “phase space” of the data. I used a 3D plotting program to visualize the time and space components of people’s movement as traces in a three-dimensional cube. In these diagrams the X and Y coordinates correspond to the X and Y dimensions of Newman Court, but the Z coordinate of each point indicates the amount of time elapsed since the beginning of the film.

If the cube is rotated so that the view is from ‘above’, the time information is collapsed. The traces of each person’s motion appear in plan view and the patterns of traffic in the space become visible. Most of the trails converge on the stairs down into the theater.

With a side view, motion along the X-axis is plotted against time. From this perspective the slope of each line indicates the person’s velocity and the direction they were moving.
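To make the slope reading concrete: with time on the vertical axis, a steep line means slow movement and a vertical line means someone standing still. Here is a minimal sketch of turning a trace into velocities, assuming each track is a list of (time, x, y) samples already converted to floor coordinates. The function name and sample values are illustrative only.

```python
import math


def velocities(track):
    """Return (t_mid, vx, vy, speed) for each pair of consecutive samples.

    track: list of (t_seconds, x, y) tuples sorted by time.
    """
    out = []
    for (t0, x0, y0), (t1, x1, y1) in zip(track, track[1:]):
        dt = t1 - t0
        if dt <= 0:
            continue  # skip duplicate or out-of-order samples
        vx = (x1 - x0) / dt
        vy = (y1 - y0) / dt
        out.append((0.5 * (t0 + t1), vx, vy, math.hypot(vx, vy)))
    return out


# Hypothetical trace: one second of walking, then one second standing still.
sample = [(0.0, 0.0, 0.0), (1.0, 3.0, 4.0), (2.0, 3.0, 4.0)]
for t_mid, vx, vy, speed in velocities(sample):
    print(t_mid, vx, vy, speed)
```

The sign of `vx` gives the direction along the X-axis, which is what the side view shows as a line leaning left or right.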

If the cube is viewed at an angle where some of all three dimensions are visible, relationships appear which are more difficult to see in strict plane views. In this diagram it is possible to pick out the lines corresponding to individuals who were moving in a group because they show fairly strict parallelism in space and time.
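Parallel traces in space and time mean that the offset between two people stays roughly constant from frame to frame. One hypothetical way to test for that automatically (not something done in the original project) is sketched below. The track format, the thresholds, and the function name are all assumptions.

```python
import math


def moving_together(track_a, track_b, tolerance=0.5,
                    max_separation=2.0, min_shared=5):
    """Guess whether two people moved as a group.

    track_a, track_b: dicts mapping frame number -> (x, y) floor position.
    tolerance: allowed wobble (same units as x, y) around the mean offset.
    max_separation: largest mean distance still counted as "together".
    min_shared: minimum number of frames in which both people appear.
    """
    shared = sorted(set(track_a) & set(track_b))
    if len(shared) < min_shared:
        return False
    offsets = [(track_b[f][0] - track_a[f][0],
                track_b[f][1] - track_a[f][1]) for f in shared]
    mean_dx = sum(dx for dx, _ in offsets) / len(offsets)
    mean_dy = sum(dy for _, dy in offsets) / len(offsets)
    if math.hypot(mean_dx, mean_dy) > max_separation:
        return False
    # Parallel traces: every offset stays close to the mean offset.
    return all(math.hypot(dx - mean_dx, dy - mean_dy) <= tolerance
               for dx, dy in offsets)


# Hypothetical tracks: a and b walk side by side, c walks the other way.
a = {f: (float(f), 0.0) for f in range(10)}
b = {f: (float(f), 1.0) for f in range(10)}
c = {f: (10.0 - f, 1.0) for f in range(10)}
print(moving_together(a, b), moving_together(a, c))
```

Note that two people standing still near each other would also pass this test, so a real analysis would probably want to require some minimum distance travelled.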

(qt video clip)

The video clip from which the data was extracted is fairly simple and uninteresting, and mostly serves to test the concept. At some point I’d like to develop this into a usable methodology. For the class, I presented a few of these examples, showed the data in the 3D software (so people could spin it to view from all angles), and physically mapped all the points onto the floor of the lobby to provide a very concrete record of the paths.

Saw a reference to a neat Dutch project, Amsterdam Realtime, that used GPS to track the positions of individuals moving through the city. Nice movies of the trajectories over time. When they are overlaid, you see the map of the city: http://realtime.waag.org/

Also saw at one point a recent project mapping paths of people at an exhibition, but now can only find these images: http://www.ccon.org/conf02/event-tracking/

and here is another similar project:

http://www.pedestrianlevitation.net/process.html

Actually saw this live at the Hall of Science in NY. Works pretty well. It tracks the paths of visitors to the exhibit, but sometimes guesses wrong and acts as if they walked through walls.

http://www.snibbe.com/scott/public/youarehere/index.html

Also, via visualcomplexity, the “space syntax” project:

http://www.visualcomplexity.com/vc/project_details.cfm?id=692&index=692&domain=

and now a company that is marketing this for use in shopping:

http://www.technologyreview.com/computing/39552/?p1=A1